Our picks

Long context models in the enterprise: benchmarks and beyond

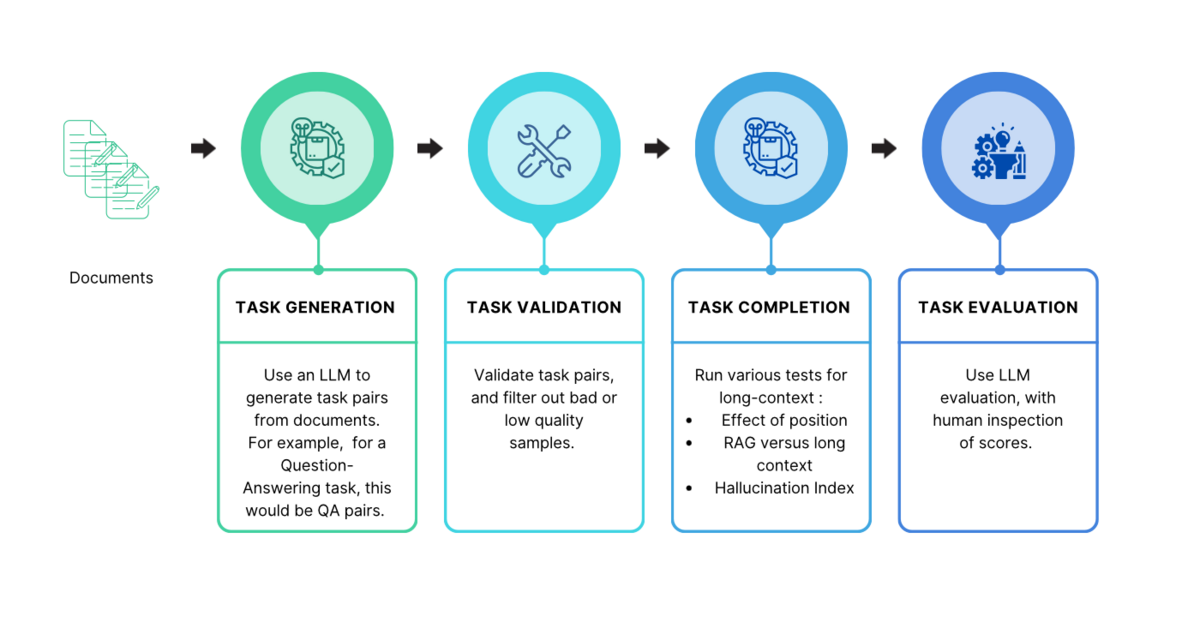

Snorkel researchers devised a new way to evaluate long context models and address their “lost-in-the-middle” challenges with medoid voting.

Snorkel AI researchers present 18 papers at NeurIPS 2023

The Snorkel AI team will present 18 research papers and talks at the 2023 Neural Information Processing Systems (NeurIPS) conference from December 10-16. The Snorkel papers cover a broad range of topics including fairness, semi-supervised learning, large language models (LLMs), and domain-specific models. Snorkel AI is proud of its roots in the research community and endeavors to remain at the forefront…

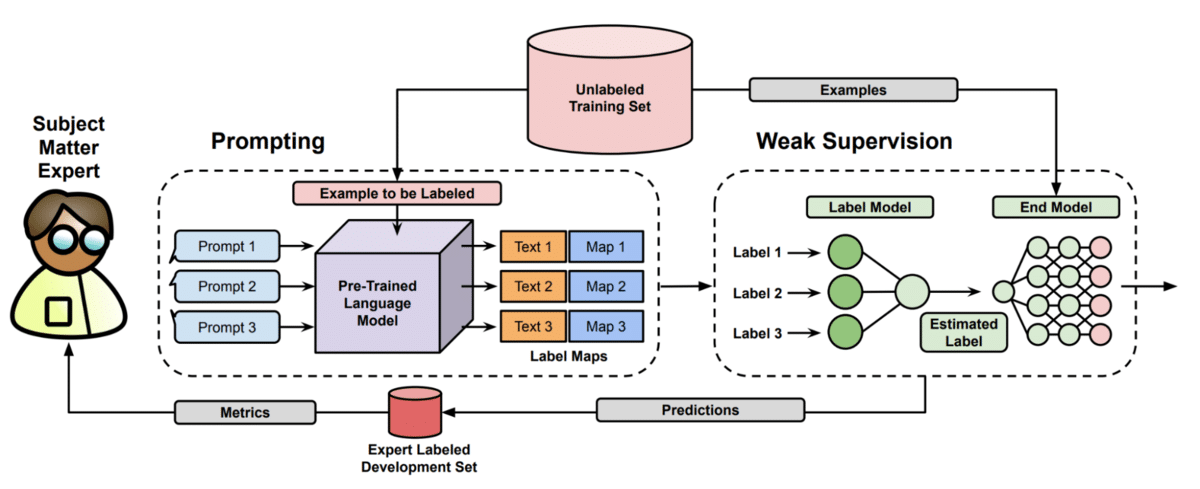

Getting better performance from foundation models (with less data)


All articles on Research

Code World Models and AutoHarness for LLM Agents

At our latest Snorkel AI Reading Group, Carter Wendelken of Google DeepMind walked us through two related papers he presented at ICLR: Code World Models for General Game Playing and AutoHarness: Improving LLM Agents by Automatically Synthesizing a Code Harness. Both ask the same question from opposite ends: when you want an LLM to act reliably in a complex, possibly…

Why coding agents need better data, evals, and environments

Coding agents have moved from tab-complete to teammate. They autonomously inspect repositories, edit files, run commands, diagnose failures, and work through multi-step engineering tasks. That creates a harder reliability problem. A model that only suggests code is easy for a human to evaluate. A coding agent refactoring your repository and testing its own changes is much harder to supervise –…

Understanding Olmix: A Framework for Data Mixing Throughout Language Model Development

At our latest Snorkel AI Reading Group, Mayee Chen (Stanford, Hazy Research) stopped by our San Francisco office to walk us through Olmix: A Framework for Data Mixing Throughout LM Development — work she contributed to during her internship at Ai2 on OLMo 3. Olmix tackles one of the messiest, least-documented levers in LLM pre-training: how to set the ratios…

Benchmarks should shape the frontier, not just measure it

Since launching the Open Benchmarks Grants, we’ve received more than 100 applications from academic groups and industry labs spanning a wide range of domains and capabilities. As the best benchmarks drive how the field allocates research effort, the bar for benchmarks has risen as well. Here, we share what’s now table stakes for useful benchmarks, and what separates the ones…

Benchtalks #1: Alex Shaw (Terminal-Bench, Harbor) – Building the Benchmark Factory

To kick off our inaugural Benchtalks, a series dedicated to the researchers building these measurement toolkits, Snorkel AI co-founder Vincent Sunn Chen sat down with Alex Shaw, Founding MTS at Laude Institute and co-creator of Terminal-Bench and Harbor. Highlights More on Terminal-Bench: See the leaderboard and the catalog of tasks at tbench.ai. Explore Harbor: Learn how to scale your agent…

Building FinQA: An Open RL Environment for Financial Reasoning Agents

TL;DR: We built FinQA — a financial question-answering environment with 290 expert-curated questions across 22 public companies, now available on OpenEnv. Agents use MCP tools to discover schemas, write constrained SQL queries, and answer multi-step questions from real SEC 10-K filings. Most open-source models struggle with this kind of multi-step tool use, and even frontier closed-source models, while more accurate,…

How Tool Discipline Let a 4B Model Outsmart a 235B Giant on Financial Tasks

The Snorkel research team collaborated with the rLLM team at UC Berkeley on the Agentica project, using their open-source rLLM framework to fine-tune Qwen3-4B-Instruct-2507, delivering a model that beats Qwen3-235B-A22B on Snorkel AI’s expert-curated financial benchmarks – at 1/60th the size. A full breakdown of the results is published in the rLLM blog here. The key insight? Just focus on…

Coding agents don’t need to be perfect, they need to recover

Error analysis of 8 models on Agentic Coding tasks. Successful completion of complex tasks doesn’t come from models always being right. It comes from models being resilient when things go wrong. To get a deeper understanding of model behavior in agentic environments, our team analyzed all of the errors found in the full traces of tasks from our Agentic Coding…

Closing the Evaluation Gap in Agentic AI

Today, AI is marked by a growing asymmetry: the excitement around agentic AI is real — backed by quantitative progress on model cards and genuine leaps forward, especially in coding. But ask individuals or enterprises where they feel ready to deploy agentic automation in high-stakes, domain-specific settings outside of coding… and you will find hesitation. The reason: our ability to…

SlopCodeBench: Measuring Code Erosion as Agents Iterate

SlopCodeBench reveals how AI coding agents degrade code quality over time—measuring “slop,” technical debt, and architectural erosion across iterations.

Introducing the Snorkel Agentic Coding Benchmark

Today, we’re sharing details about the Snorkel Agentic Coding benchmark—a comprehensive evaluation suite designed to test whether agents can handle the full complexity of software engineering work.

2026: The year of environments

We just returned from NeurIPS 2025, and we’re still processing everything we saw. The energy around data-centric AI has never been stronger—and we couldn’t be more grateful to the research community for pushing these ideas forward.

Part V: Future direction and emerging trends

Explores how rubrics support agentic, multi-turn, tool-using, multimodal, and code-generating AI systems, and how they evolve with AI feedback and ensemble evaluation.

The self-critique paradox: Why AI verification fails where it’s needed most

TL;DR: We stress-tested the “generate → criticize → improve” loop on 50 visual reasoning tasks. The results were counterintuitive: self-critique acts as a corrosive agent on high-performance tasks, turning 98% accuracy into 57%. Yet, for tasks where models fail completely, it works like magic. This difficulty-dependent behavior poses a critical, hidden risk for RLFT pipelines. The promise vs. the reality…

A chat with the Terminal-Bench team

Snorkel Chief Scientist Fred Sala and Kobie Crawford chat with the Terminal-Bench team to unpack the design behind Terminal-Bench 2.0 and the new Harbor framework.