Latest posts

LLM-as-a-judge for enterprises: evaluate model alignment at scale

Discover how enterprises can leverage LLM-as-a-judge systems to evaluate generative AI outputs at scale, improve model alignment, reduce costs, and tackle challenges like bias and interpretability.

Why GenAI evaluation requires SME-in-the-loop for validation and trust

Enterprises need to be able to trust and rely on GenAI evaluation results, and that requires SME-in-the-loop workflows. In my first blog post on enterprise GenAI evaluation, I discussed the importance of specialized evaluators as a scalable proxy for SMEs. It simply isn’t practical to task SMEs with performing manual evaluations – it can take weeks if not longer, unnecessarily…

Research spotlight: is long chain-of-thought structure all that matters when it comes to LLM reasoning distillation?

We’re taking a look at the research paper, LLMs can easily learn to reason from demonstration (Li et al., 2025), in this week’s community research spotlight. It focuses on how the structure of reasoning traces impacts distillation from models such as DeepSeek R1. What’s the big idea regarding LLM reasoning distillation? The reasoning capabilities of powerful models such as DeepSeek…

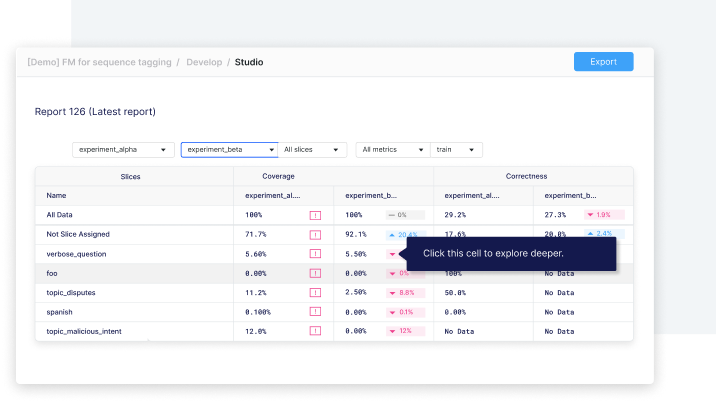

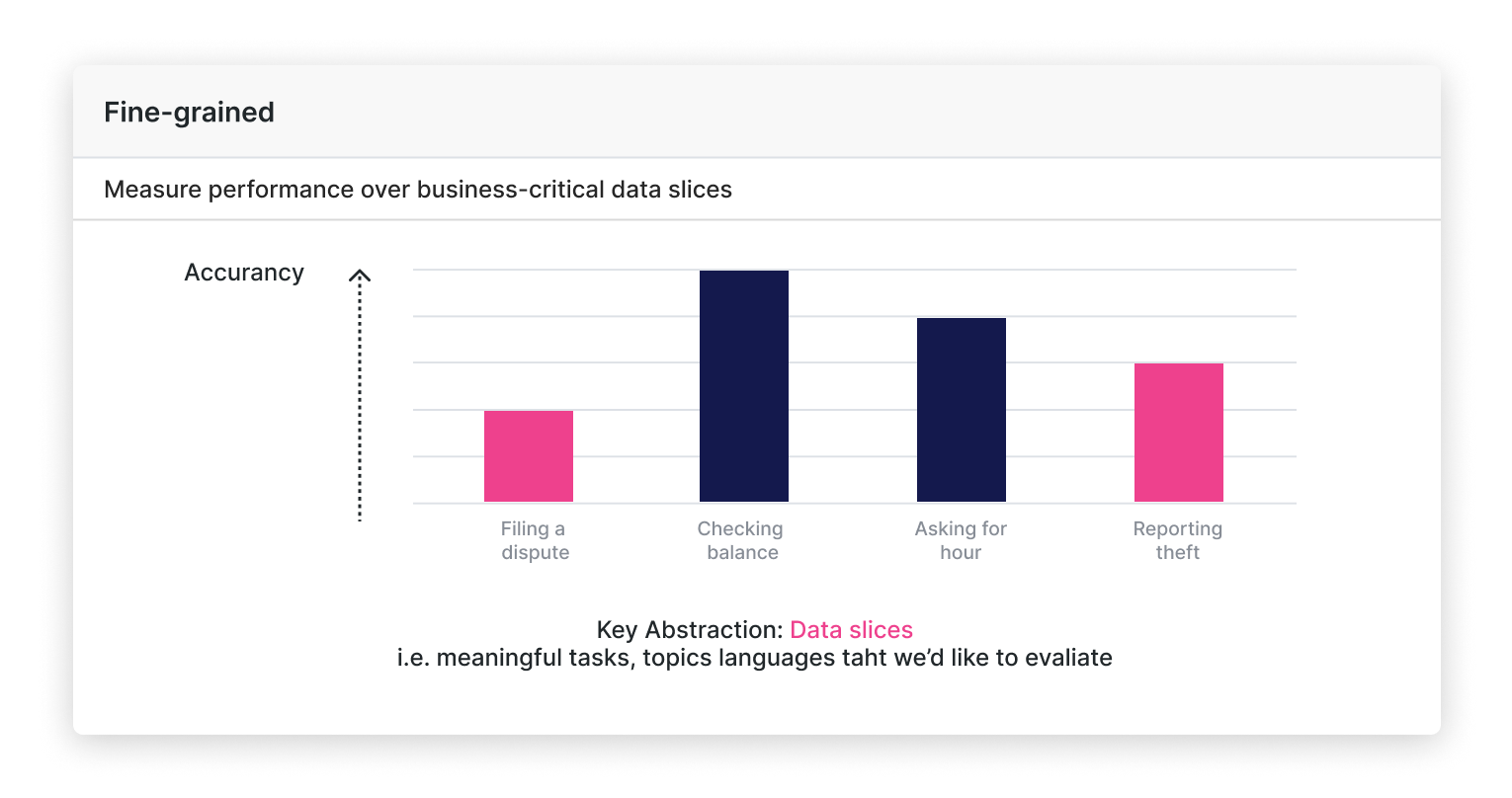

Why enterprise GenAI evaluation requires fine-grained metrics to be insightful

GenAI needs fine-grained evaluation for AI teams to gain actionable insights.

What is specialized GenAI evaluation, and why is it so critical to enterprise AI?

Specialized GenAI evaluation ensures AI assistants meet business requirements, SME expertise, and industry regulations—critical for production-ready AI.

LLM alignment techniques: 4 post-training approaches

Ensure your LLMs align with your values and goals using LLM alignment techniques. Learn how to mitigate risks and optimize performance.

Research spotlight: Is intent analysis the key to unlocking more accurate LLM question answering?

Learn how ARR improves QA accuracy in LLMs through intent analysis, retrieval, and reasoning. Is intent the key to smarter AI? Explore ARR results!

Why enterprises should embrace LLM distillation

Unlock possibilities for your enterprise with LLM distillation. Learn how distilled, task-specific models boost performance and shrink costs.

Retrieval-augmented generation (RAG) failure modes and how to fix them

Discover common RAG failure modes and how to fix them. Learn how to optimize retrieval-augmented generation systems for max business value.

What is large language model (LLM) alignment?

Learn about large language model (LLM) alignment and how it maximizes the effectiveness of AI outputs for organizations.

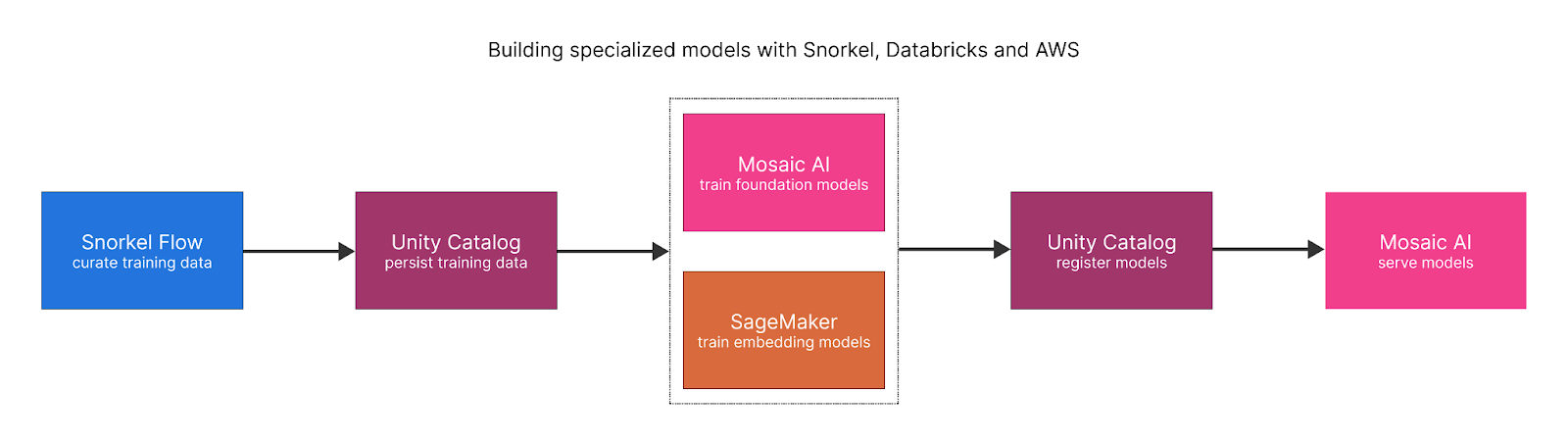

Databricks + Snorkel Flow: integrated, streamlined AI development

Discover the power of integrating Databricks and Snorkel Flow for efficient data ingestion, labeling, model development, and AI deployment.

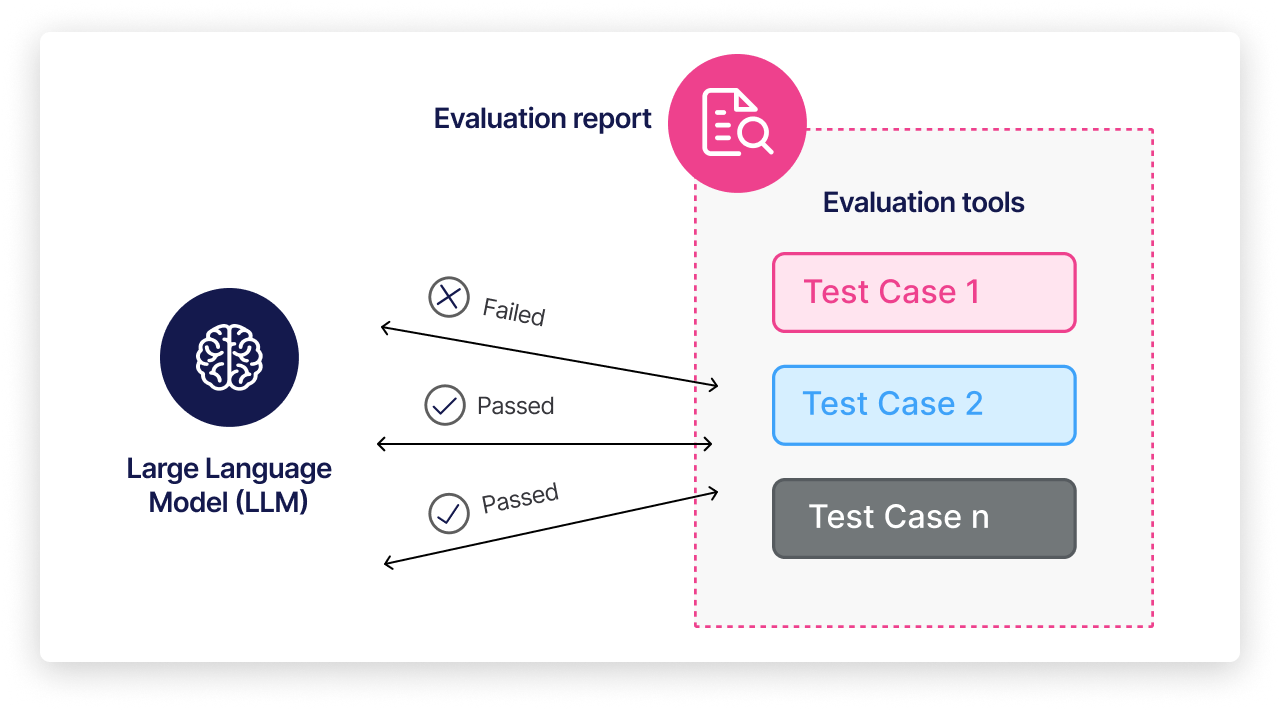

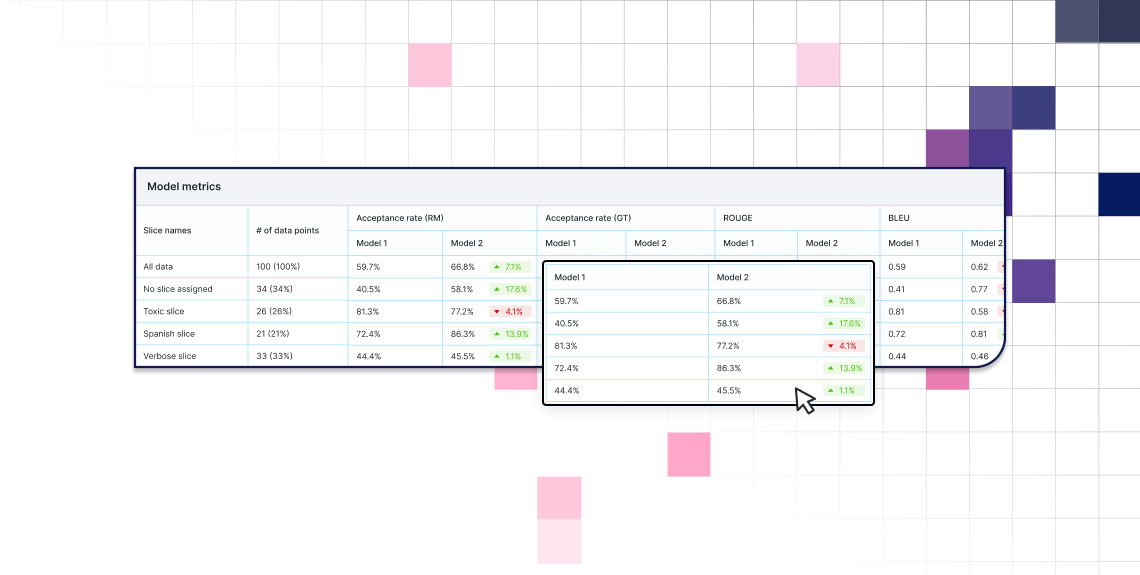

How LLM evaluation drives better models in Snorkel Flow

Discover how Snorkel AI’s methodical workflow can simplify the evaluation of LLM systems. Achieve better model performance in less time.

Unlock proprietary data with Snorkel Flow and Amazon SageMaker

Accelerate LLM development with Snorkel Flow and SageMaker. Automate dataset curation, accelerate training, and gain a competitive advantage.

LLM evaluation in enterprise applications: a new era in ML

Learn about the obstacles faced by data scientists in LLM evaluation and discover effective strategies for overcoming them.

Snorkel AI joins the AWS ISV Accelerate Program and launches Snorkel Flow Availability in AWS Marketplace

Snorkel AI and AWS are partnering to help enterprises build, deploy, and evaluate custom, production-ready AI models. Learn how.

AI data development: a guide for data science projects

What is AI data development? AI data development includes any action taken to convert raw information into a format useful to AI.

SnorkelCon 2024: Inaugural Snorkel AI user conference gathers leaders from 30+ Fortune 500 companies

Discover highlights of Snorkel AI’s first annual SnorkelCon user conference. Explore Snorkel’s programmatic AI data development achievements.

Explore the new GenAI Evaluation Suite: Snorkel 2024.R3

We aim to help our customers get GenAI into production. In our 2024.R3 release, we’ve delivered some exciting GenAI evaluation results.

Snorkel Flow 2024.R3: Supercharge your AI development with enhanced data-centric workflows

Snorkel AI has made building production-ready, high-value enterprise AI applications faster and easier than ever. The 2024.R3 update to our Snorkel Flow AI data development platform streamlines data-centric workflows, from easier-than-ever generative AI evaluation to multi-schema annotation.

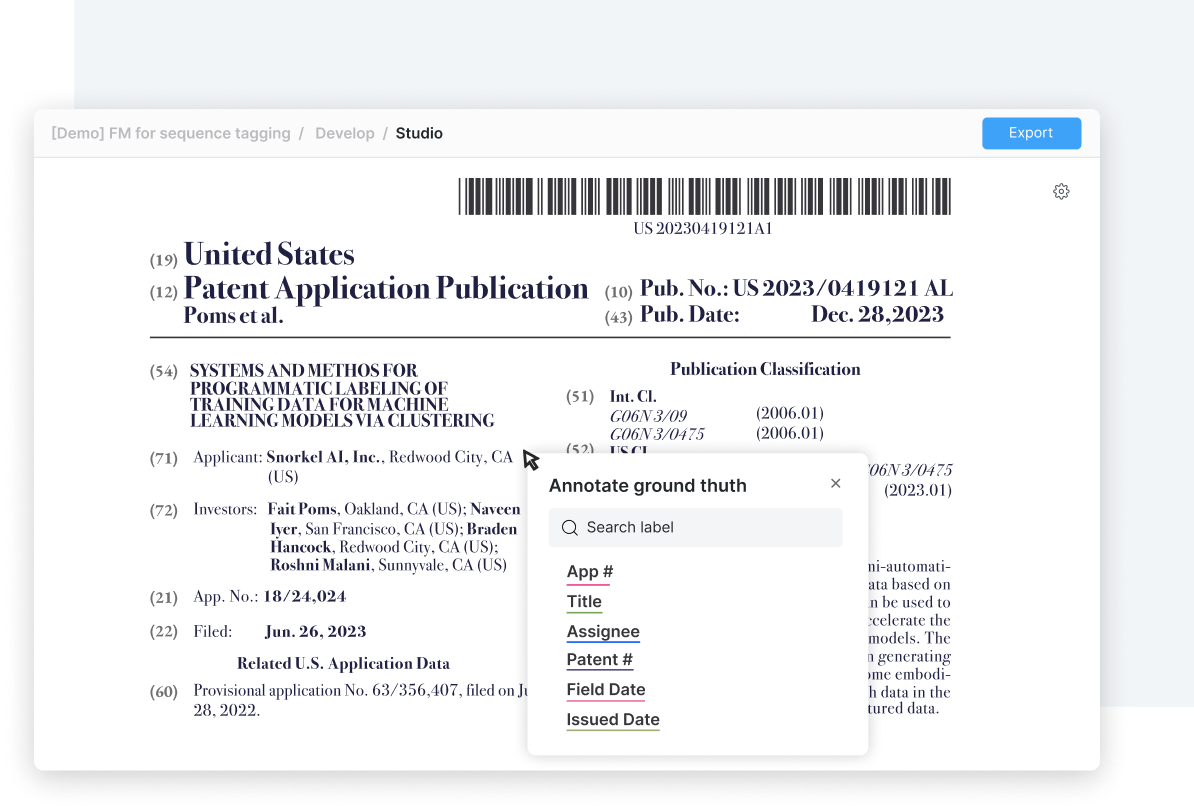

New NLP features in Snorkel Flow 2024.R3

Discover new NLP features in Snorkel Flow’s 2024.R3 release, including named entity recognition for PDFs + advanced sequence tagging tools.

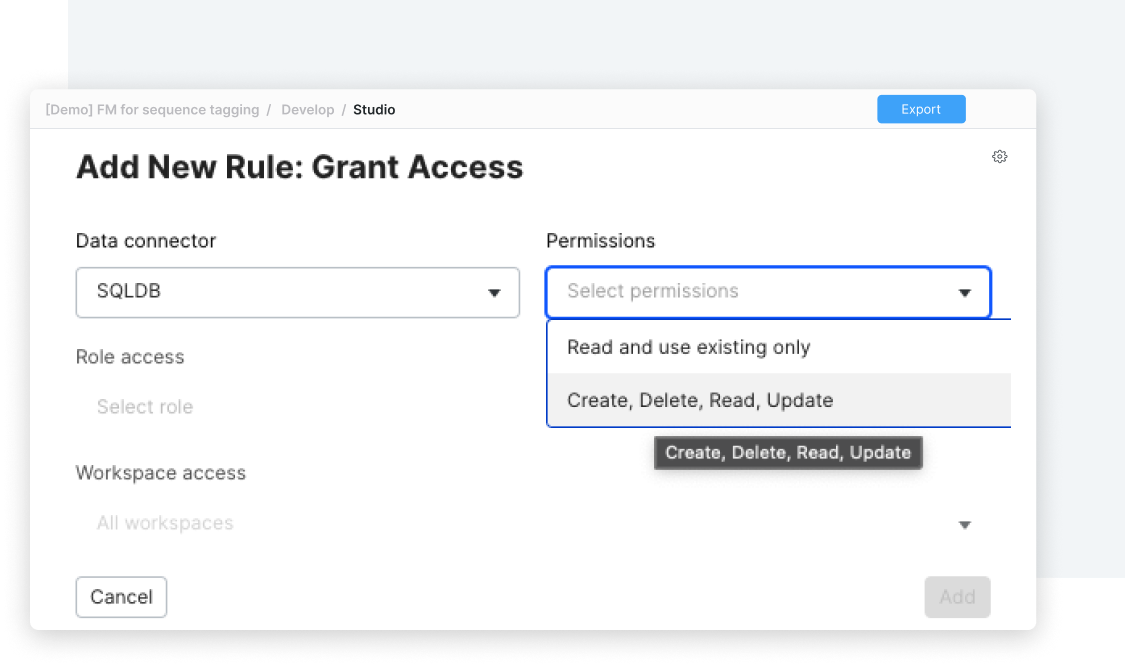

Enterprise data compliance and security review: Snorkel Flow 2024.R3

Discover the latest enterprise readiness features for Snorkel Flow. Configure safeguards for data compliance and security.

How a global financial services company built a specialized AI copilot accurate enough for production

Learn how Snorkel, Databricks, and AWS enabled the team to build and deploy small, specialized, and highly accurate models which met their AI production requirements and strategic goals.

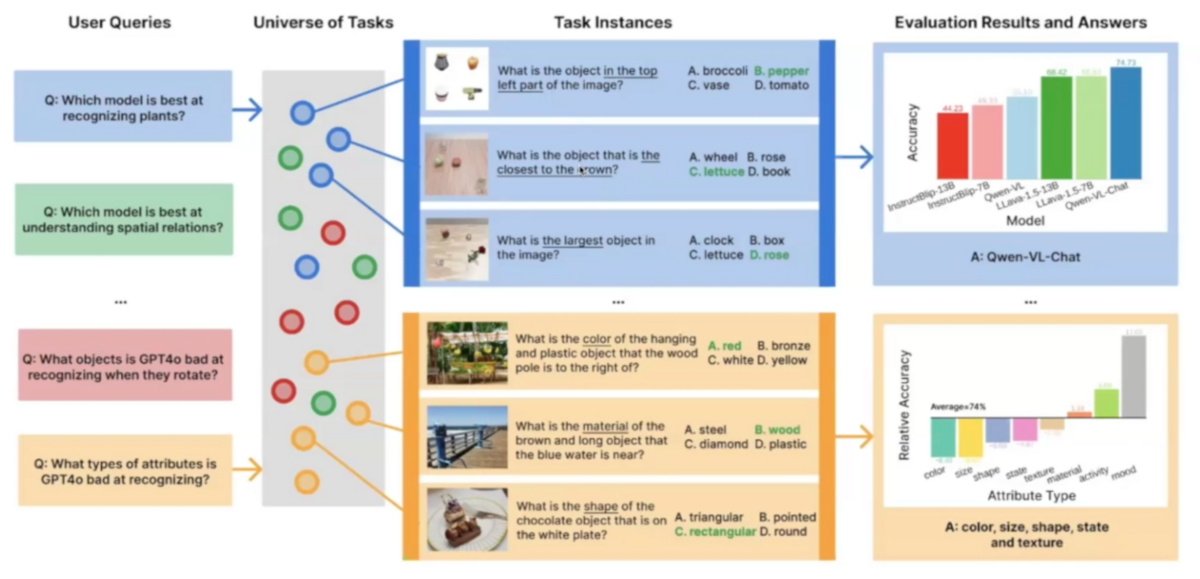

Task Me Anything: innovating multimodal model benchmarks

“Task Me Anything” empowers data scientists to generate bespoke benchmarks to assess and choose the right multimodal model for their needs.

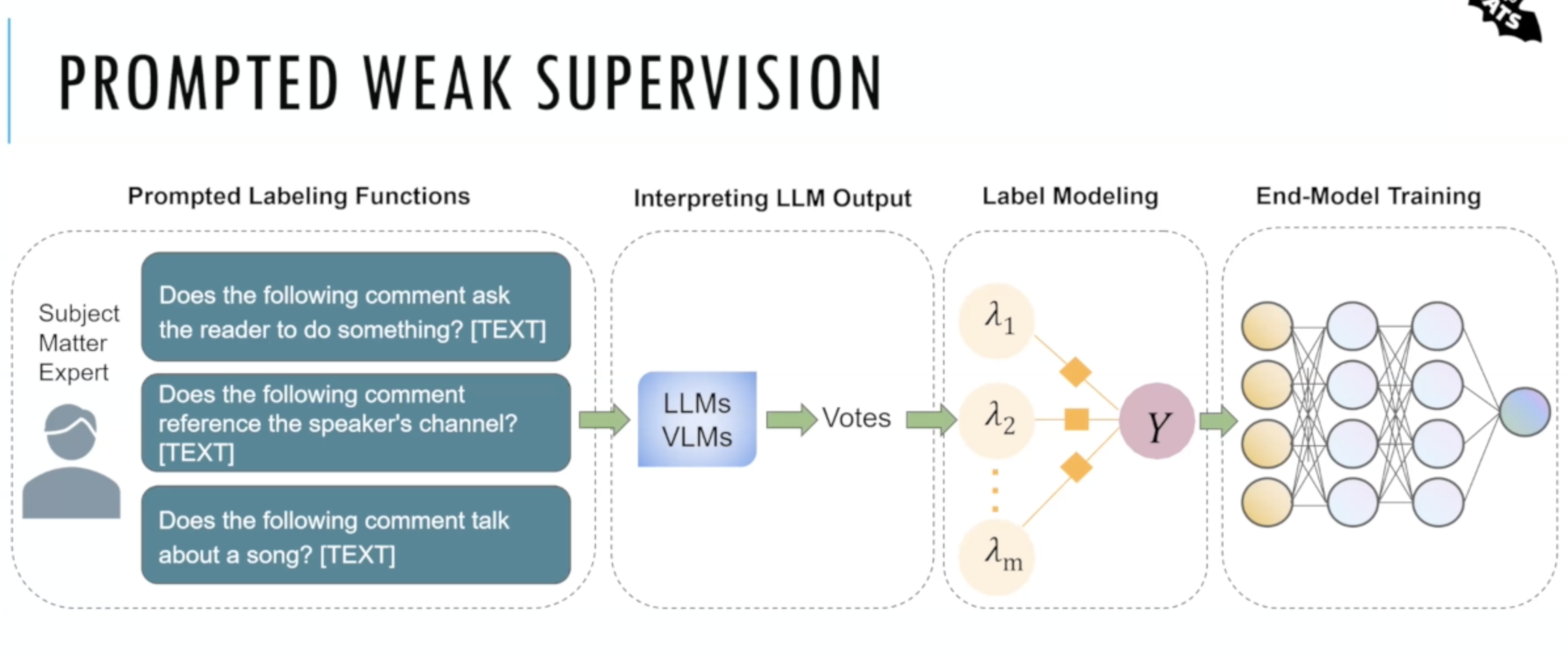

Alfred: Data labeling with foundation models and weak supervision

Introducing Alfred: an open-source tool for combining foundation models with weak supervision for faster development of academic data sets.

RAG: LLM performance boost with retrieval-augmented generation

Retrieval-augmented generation (RAG) enables LLMs to produce more accurate responses by finding and injecting relevant context. Learn how.

Call center AI for customer experience management: a case study

How one large financial institution used call center AI to inform customer experience management with real-time data.

New GenAI features, data annotation: Snorkel Flow 2024.R2

This release features new GenAI tools and Multi-Schema Annotation, as well as new enterprise security tools and an updated home page.

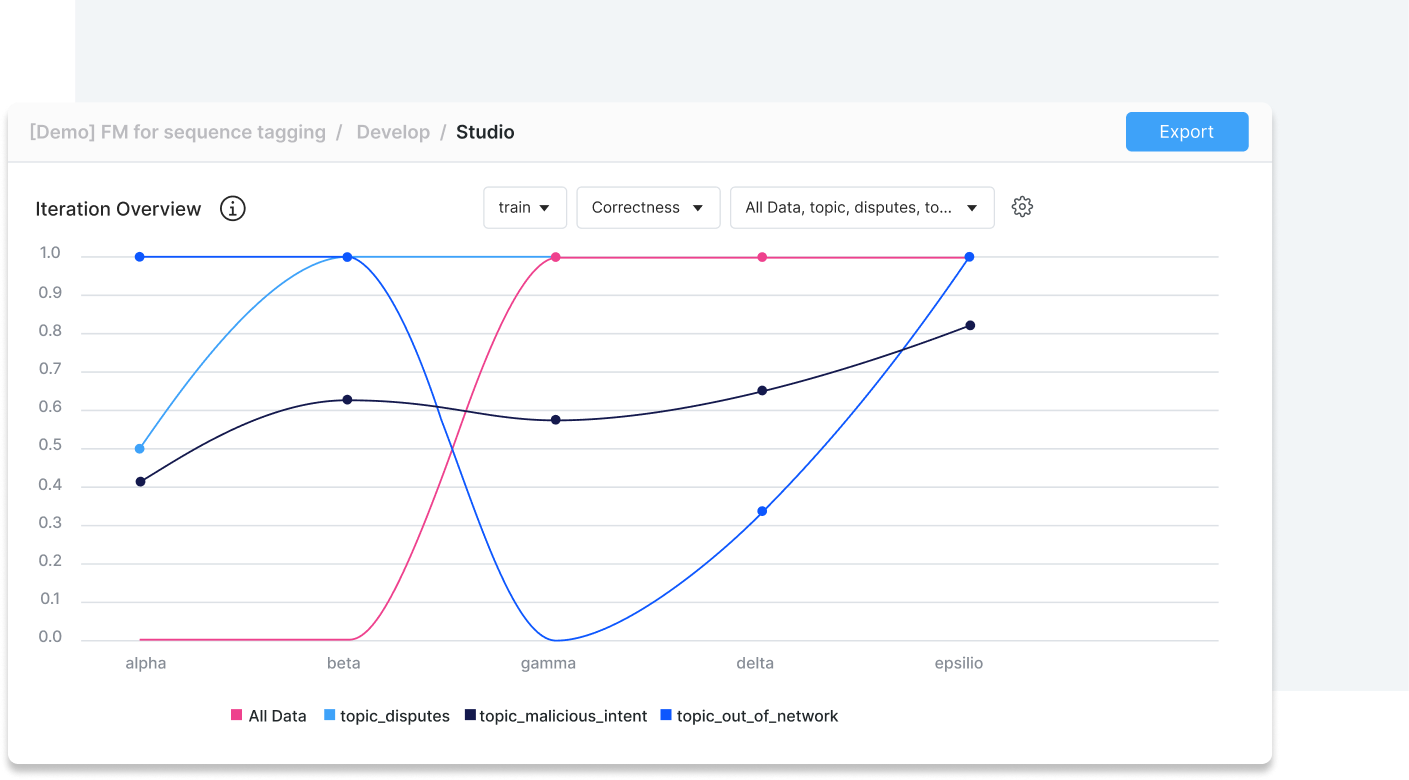

How data slices transform enterprise LLM evaluation

Enterprises must evaluate LLM performance for production deployment. Custom, automated eval + data slices present the best path to production.

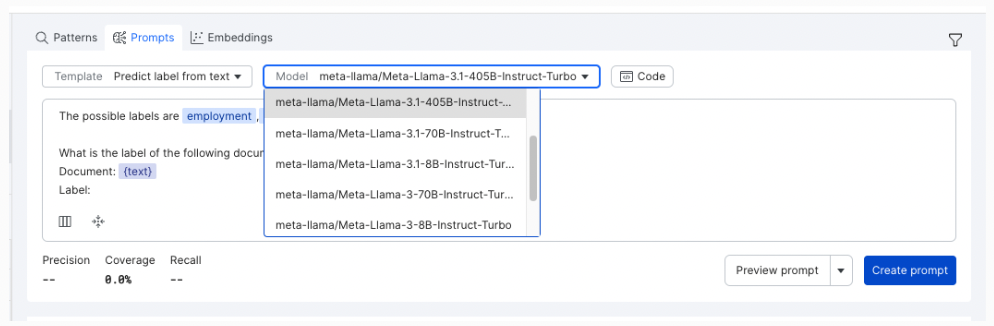

Meta’s Llama 3.1 405B is the new Mr. Miyagi, now what?

Meta’s Llama 3.1 405B rivals GPT-4o in benchmarks, offering powerful AI capabilities. Despite high costs, it can enhance LLM adoption through fine-tuning, distillation, and use as an AI judge.

Meta’s new Llama 3.1 models are here! Are you ready for it?

Meta released Llama 3.1 405B today, signaling a new era of open source AI. The model is ready to use on Snorkel Flow.