ScienceTalks with Abigail See. Diving into the misconceptions of AI, the challenges of natural language generation (NLG), and the path to large-scale NLG deployment

In this episode of Science Talks, Snorkel AI’s Braden Hancock chats with Abigail See, an expert natural language processing (NLP) researcher and educator from Stanford University. We discuss Abigail’s path into machine learning (ML), her previous work at Google, Microsoft, and Facebook, and her journey with Stanford.

This episode is part of the #ScienceTalks video series hosted by the Snorkel AI team. You can watch the episode here or directly on YouTube:

Below are highlights from the conversation, lightly edited for clarity:

How did you get into machine learning?

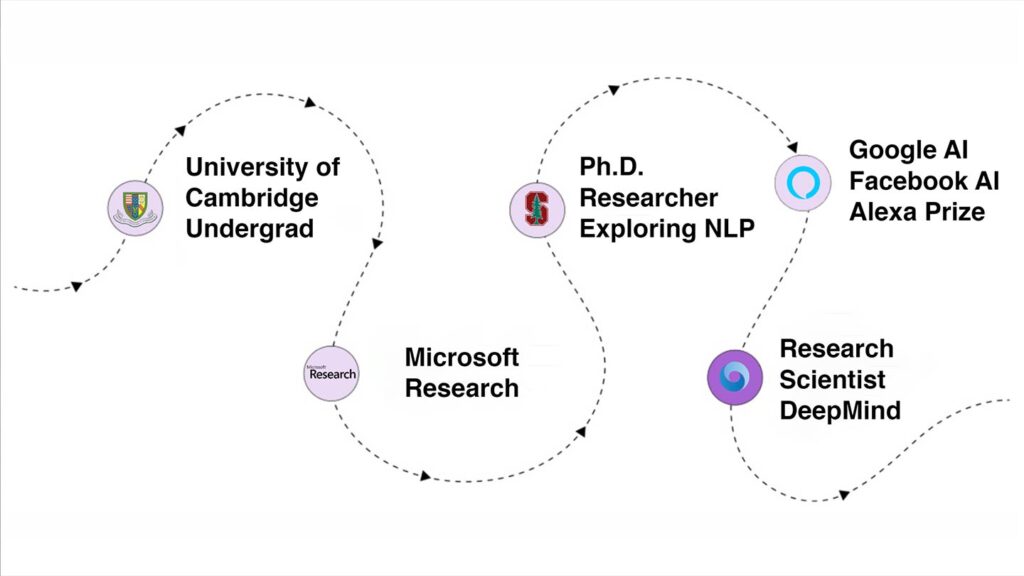

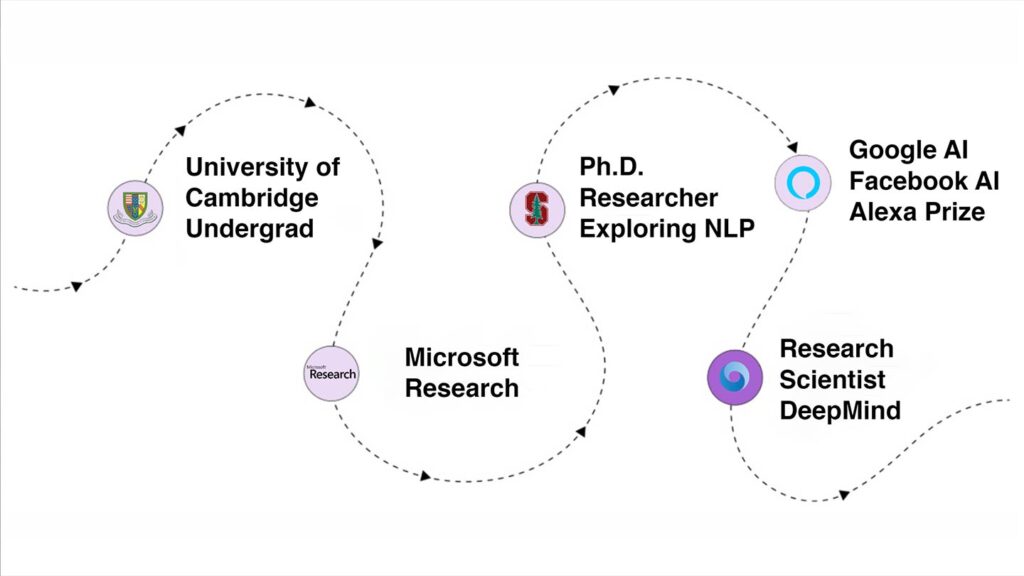

Abi: I studied maths during my undergrad and didn’t get into Computer Science until near the end of it. I did some internships at Microsoft Research in Cambridge, but those internships didn’t focus on machine learning. They were in other areas of Computer Science, on the more theoretical side. So I accidentally got into ML afterward when I applied for some Ph.D. programs in the US without honestly having a solid idea of what research in Computer Science I wanted to do. It was 2015, and the deep learning renaissance was just a few years in, so it was a very exciting time.

After some digging, Stanford seemed like probably the most exciting place to start my CS research career. There I worked a bit with the staff at the NLP group and also the Stanford AI Lab, and I ended up choosing NLP because I’ve always liked working with language and studying it. So that was an area of interest, but the NLP group is also just an outstanding group of people.

Braden: One of the places where many of our listeners will have seen you before is that you co-instructed the NLP with Deep Learning class at Stanford with Chris Manning and Richard Socher. From experience, I know that that class often has a huge number of participants from a wide variety of backgrounds, so you were probably exposed to quite a few different points of view on how people think about ML. I would love to hear, given the theme of our Science Talks:

What are some common misconceptions you’ve seen around what does and doesn’t make ML work in practice?

Abi: For the general public who mostly read about AI through the media or even through fiction (such as movies and so on), one of the primary misconceptions is that they often think of AI as artificial general intelligence (AGI). They tend to associate AI with something that is even sentient, or like a whole agent with whole intelligence, and they are not aware that most practical AI these days is narrow or applied to specific tasks. Also, during my teaching career, we had people who had a bit more background in ML or CS but still emphasized training machine learning models and making bigger and better architectures, thinking of that as the primary route to making ML work in practice.

There is sometimes a lack of awareness of how much everything else affects these machine learning systems’ effectiveness. For instance, choosing the data is incredibly important, as are the last-mile efforts, such as how exactly you deploy the system, understanding your deployment scenario, and whether that’s a good fit with how you trained it, the training data, etc. Those processes, which are maybe a bit less glamorous than coming up with exciting new architectures, tend to be a bit overlooked.

Braden: That’s one of the interesting challenges of academia where you need to do controlled experiments, and so it’s easy—and in some ways, good practice—to lock parts of the pipeline and only modify others. And often the locked part is the dataset, because it can be so difficult to collect, and then you iterate on all the models. It makes for good comparison tables, good benchmarks in research, but doesn’t actually reflect what improves quality the most in practice—improving the data.

Speaking of interesting datasets, you’ve done a lot of great work in dialogue—in particular natural language generation (NLG), with pointer-generator networks at Google, controlling chatbots on different axes of expression at FAIR, and then most recently leading a Stanford team competing for the Alexa Prize.

What are the challenges of ML or NLP in natural language generation specifically?

Abi: This has been the focus of most of my Ph.D. work: neural text generation for different tasks. One of the reasons I chose this area is the extensiveness of the work it needed, not just in making more effective techniques but even in understanding what the problems with the standard techniques were, how we can improve on them, and how we can measure and evaluate those problems. One way to think about why text generation is so difficult is that it’s unlike, let’s say, classification, where you have some text that you want to classify into one of several labels, which is essentially one decision. Text generation can be seen as a whole sequence of decisions: if you’re generating some output text, that’s a sequence of words that you’re generating.
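To make that contrast concrete, here is a minimal sketch (not from the conversation) that counts how many distinct outputs each setting allows. The label count, vocabulary size, and output length are illustrative assumptions, and `count_outputs` is a hypothetical helper.

```python
def count_outputs(choices_per_step: int, num_steps: int) -> int:
    """Number of distinct outputs when one of `choices_per_step` options
    is picked at each of `num_steps` decision points."""
    return choices_per_step ** num_steps


# Classification: a single decision among a few labels (assumed: 5 labels).
classification_outputs = count_outputs(choices_per_step=5, num_steps=1)

# Generation: one decision per word (assumed: 50,000-word vocabulary, 20 words).
generation_outputs = count_outputs(choices_per_step=50_000, num_steps=20)

print(classification_outputs)        # 5
print(len(str(generation_outputs)))  # 94 (the count has 94 digits)
```

Even with these modest assumed numbers, the generation count is a 94-digit number, which is one way to see why an early mistake in the sequence is hard to recover from and why exhaustive evaluation is out of reach.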

Speaking of the evaluation, that’s also a very big problem in the text-generation community given that it’s quite difficult to accurately evaluate whether the generated text is of high quality. Typically we can only really evaluate through human judgments, asking people to look at the text and judge whether it’s high quality or not, but that’s quite tough because you have to pay people to do that. It’s relatively slow and more expensive; you can’t do it as often or as quickly, which again forms a significant obstacle in the development and comparison of generation systems, so I’d say that’s probably another primary reason why generation is quite a tricky area.

Braden: That output space (not outputting one of a discrete set of labels, but potentially having access to a monstrous vocabulary of individual words and then the sequence, as you said) means that the space is just almost unfathomably large in terms of potential things that can come out of a generation system’s mouth. How about on the data side? With that loose and large of a potential output space and lots of parameters to train, neural models seem to have performed best in recent years, which of course are pretty data-hungry.

What have you seen be effective or problematic when getting the training datasets for those types of NLG tasks?

Abi: With dialogue, in particular, one of the big problems with training data is that most of the world’s dialogue data is private, especially if you’re interested in one-to-one conversations. There is a considerable amount of that data on private servers given all the messaging apps people use, which is rightfully private, so the academic community can’t train models on it. This barrier forms a problem because, when we’re trying to train dialogue models, we often need to use whatever data is freely and openly available on the internet, which tends to be quite different.

For instance, Reddit is often used as a source of a lot of dialogue-style training data, but there are significant differences between Reddit conversations and one-on-one conversations. On Reddit, you have branching threads where someone makes comments, and then many different people may respond to those, so it’s not a one-to-one interaction; it involves many people. That’s very different from a lot of applications that we’re interested in, which tend to be one-to-one, with one chatbot and one user. Some of the big neural generative dialogue systems that have been trained, like Meena and DialoGPT, do in part use Reddit training data, which in turn can cause a problem because it means the model is trained on a style or conversation tone, and often a topicality, that doesn’t necessarily match what you’re trying to do.

So, of course, the main approach to NLP these days is that you take a large pre-trained model that was pre-trained on what is hopefully a general and diverse pre-training set, and then you fine-tune it on data that more closely represents what you’re trying to achieve. But even then, if you’re trying to operate in the academic community and use openly available datasets, it’s quite hard to find the style of one-to-one conversations that you might want.

Braden: So you need a lot of data, and a lot of the data you’d like to use is private or semi-private. When you do find data, it’s not a perfect fit for your problem, with the downstream safety issues and tonal issues and all of that. So as you alluded to, we see this common approach where people will pre-train on various things and try to get increasingly refined as they have small amounts of data that are a better and better fit for the task. I know you did some work at Facebook, I believe around those challenges: not just general architectures or general dataset collection, but trying to have a little more control over what your model ultimately learns or how it expresses itself along different axes.

How can you make some of those targeted corrections that address specific failure modes or error modes?

Abi: The work I did at Facebook on controlling text generation was quite rudimentary in that we were trying to apply different techniques to get a neural generative model to generate text with certain attributes that we wanted. The reason why I say it was quite rudimentary is because the attributes that we were trying to control were quite basic. So, for example, at least at the time, with the kinds of models we were using and the types of decoding algorithms we were using, there was quite a widespread problem with models writing generic, bland output or sometimes even repeating themselves.

Still, as an overall method to fix these problems, it probably isn’t the best and most principled way forward because it’s not really feasible. In the following years, these increasingly large GPT models have shown that scaling up in size and amount of training data, along with some other advancements that have been made, has fixed some of these problems, such as bland responses. That doesn’t mean, though, that control is not still an interesting question. It certainly is, even with the best generative models we have now, which are able to generate quite long passages of text that seem quite convincing. In fact, there are some studies [2, 3] showing that humans are not able to distinguish them from human-written text in some cases.

Control is still as important as ever because we could generate such a large space of things. Your scope for things going wrong is so much larger. In fact, if you’re able to generate even longer and more convincing texts, now we have to worry even more about things like fake news and how these things could be used. So there’s a great deal of interest in the scientific community now about looking at how we can enact different kinds of restrictions on the output of these generation models.

So maybe a concrete example that’s probably more achievable soon would be factual accuracy: sticking to what is grounded in some sort of document. For instance, in summarization or abstractive question answering, you’re writing new text, but it still needs to be factually grounded in some source document. One big problem with current neural models is that they are so good at paraphrasing and using the knowledge that they’ve learned from pre-training that sometimes they hallucinate, as we would say, meaning they write things that aren’t supported by the source document but which they’ve just made up as being plausible in general. This is a big problem and is probably the main thing that stands in the way of neural generative models being used in these applications. This area of research looks at how you can control them to ensure that everything they generate can be traced to something mentioned in the source document.
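As a rough illustration of the grounding idea, the sketch below flags words in a generated text that never appear in the source document. This is a deliberately crude word-overlap proxy chosen for illustration; it is not a method described in the conversation or an established faithfulness metric, and the function names and example texts are hypothetical.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def unsupported_tokens(source: str, generated: str) -> list[str]:
    """Return tokens of `generated`, in order, that do not occur in `source`."""
    source_vocab = set(tokenize(source))
    return [tok for tok in tokenize(generated) if tok not in source_vocab]


# Hypothetical example texts, made up for this sketch.
source = "The company reported revenue of 5 million dollars in 2020."
generated = "The company reported record revenue of 7 million dollars in 2020."

print(unsupported_tokens(source, generated))  # ['record', '7']
```

A check like this would also flag legitimate paraphrases, since it only compares surface words, which hints at why controlling for factual grounding is harder than it first appears.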

When do you think humans will be comfortable with these generative models being part of day-to-day life?

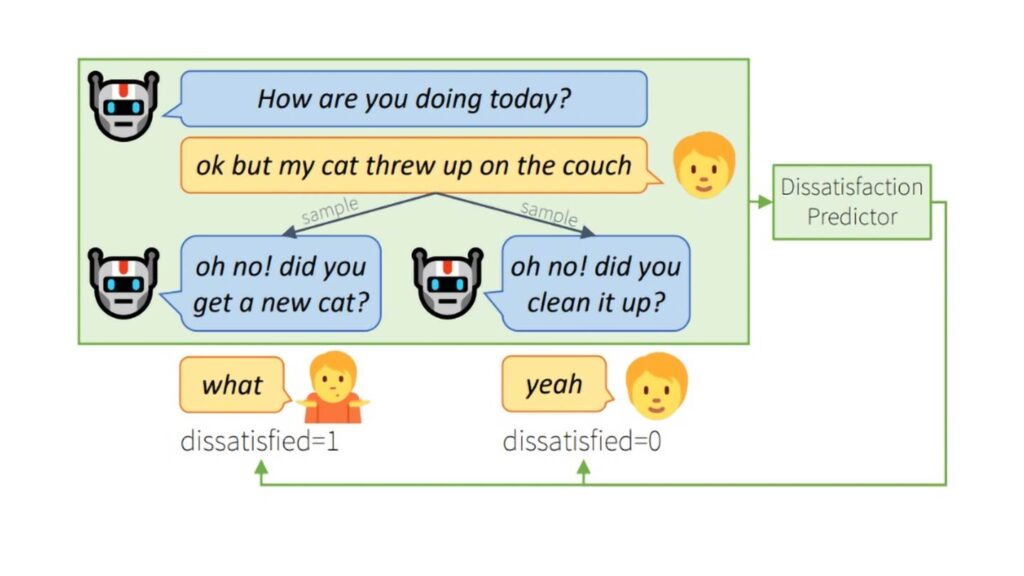

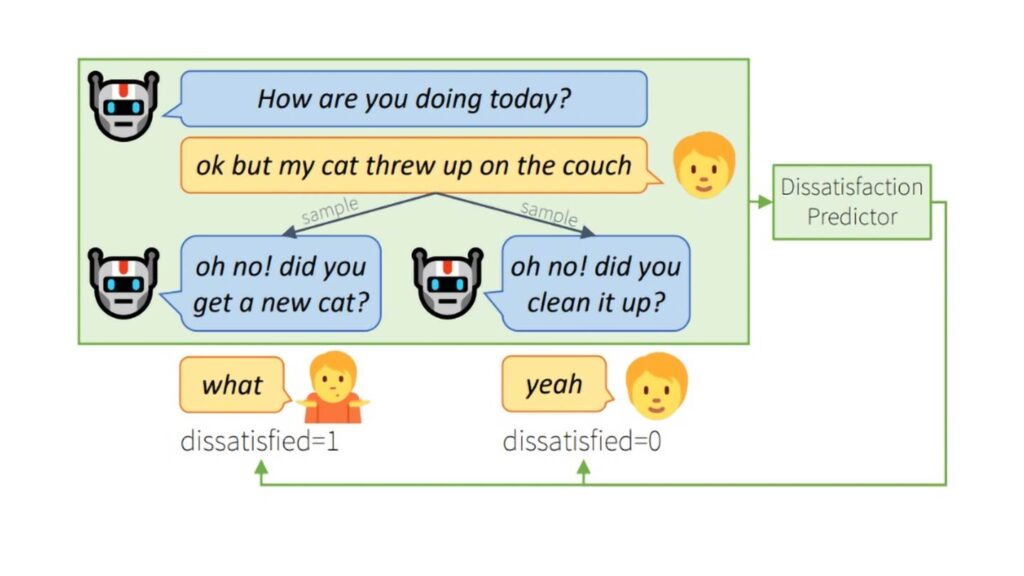

Abi: That’s a really important and significant question, and there are a lot of different parts to it. So you mentioned how people react to these models, and that in itself is something that’s evolving. For example, in our work for the Alexa Prize, one of the things we looked at was how people react to neural generative models when they’re chatting with them; in particular, what kinds of mistakes the models make and how that interacts with how people express whether they think the conversation is going well or not. We found some interesting things about how different users had quite varying expectations for the conversations, given that these social bots are not yet a familiar part of life for the US-based users who were talking to them. Those users haven’t calibrated their expectations. We’re all very used to having virtual assistants like Siri and Alexa, and we’ve calibrated our expectations for what we think they can do right now, but people have different expectations around what social bots should be able to do.

Next, we spoke earlier about factual correctness, which seems pretty indispensable if you’re going to try to have a knowledge-grounded conversation. There are also safety concerns. One of the problems that we faced during the Alexa Prize is that we blocked our bot off from talking about anything which was in any way controversial, because we hadn’t adequately built its ability to talk about those things appropriately. But, as a consequence, that can be quite disappointing to users sometimes, because they actually want to talk about these things; they’re very important to them as issues. It can be disappointing for users if we set ourselves up as “hey, this is our chatbot, we can talk about anything, it’s open-domain,” but then say, “no, we can’t talk about that.” So certainly in the future, we would want these chatbots to be able to talk about sensitive and divisive issues in a way that is appropriate and knowledge-grounded, and yet doesn’t exclude any users from the conversation. That is a really tall order, and we’d have to think carefully about how we want to do that as well.

Braden: Thank you so much for joining us, Abi. We’ll be back soon with more Science Talks on how to make AI practical.

Where to follow Abigail: Twitter, GitHub, Website. Please don’t forget to subscribe to our YouTube channel for future ScienceTalks or follow us on Twitter, LinkedIn, Facebook, or Instagram.

1 “Neural Generation Of Open-Ended Text And Dialogue”. Abigail Elizabeth See. 2021. Purl.Stanford.Edu. https://purl.stanford.edu/hw190jq4736.

2 Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever. 2019. Language models are unsupervised multitask learners. OpenAI tech report.

3 Tom B Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. 2020. Language models are few-shot learners. arXiv preprint arXiv:2005.14165.
