New Snorkel benchmark leaderboards. See the results.

3 Impractical Assumptions About AI to Avoid

“Assume a spherical cow.” It’s a joke so well-known that its punchline has its own Wikipedia page. Various twists on the joke exist, but the gist is the same: a theoretical physicist attempts to solve a practical problem by assuming away all the hard parts. The joke bears a striking similarity to today’s artificial intelligence and machine learning technologies. Impractical ML assumptions are made every day in research, which limit its adoption. In the real world, these assumptions do not hold up. This is also why 87% of data science projects never get to production.

Impractical Assumptions Made by AI Practitioners

Let’s talk about these three impractical assumptions.

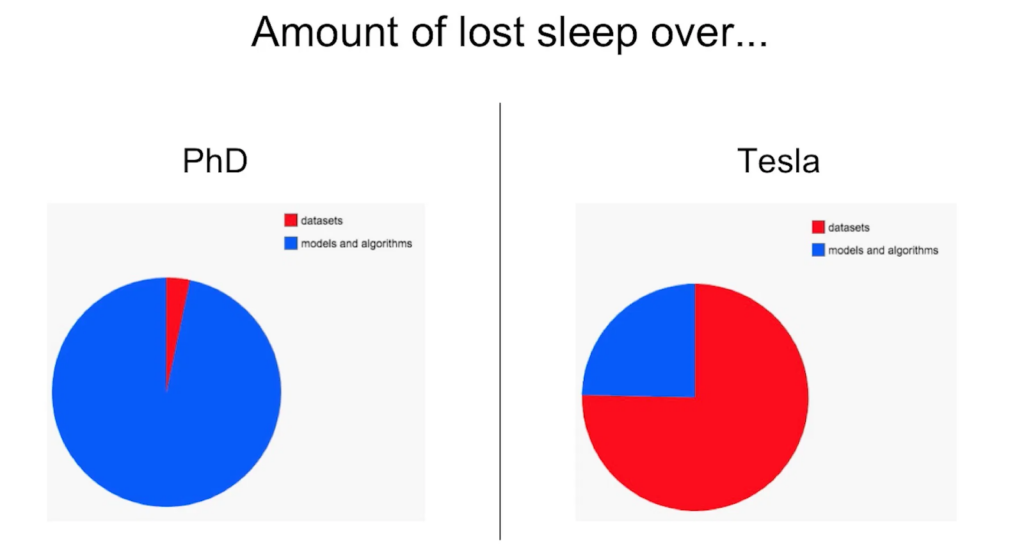

1. Assume a large, high-quality, task-specific training dataset

In research, ML projects are usually well-defined and use pre-existing datasets. Typically, data scientists download datasets and focus mostly on model building and downstream tasks. On the other hand, when teams build production applications, obtaining the training dataset is the bigger bottleneck, not training the model. This isn’t a task you do once—it’s a process you repeat as you figure out what to track, deal with noise, and craft a dataset that reflects your needs. To assume the existence of a readily available, high-quality dataset is to ignore one of the most crucial challenges for teams implementing ML solutions. This delays development as teams spend more and more time collecting and labeling data or hitting an accuracy ceiling with limited data.Andrej Karpathy shared a similar sentiment in a 2018 talk:

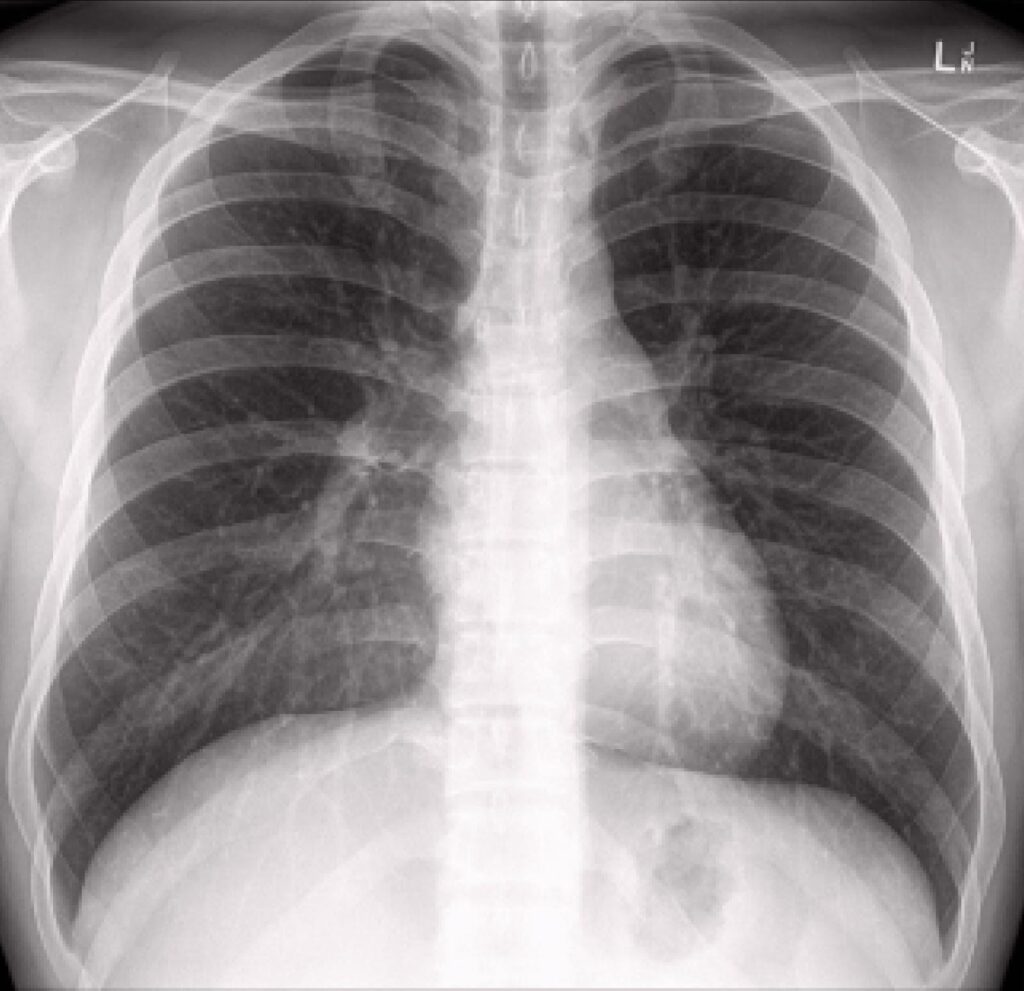

2. Assume an endless supply of qualified annotators

In research settings, datasets are often publicly available and rather simple to label. Moreover, problem definition is loose or simplified, so crowdsourced and untrained annotators can quickly and cheaply label data. In the real world, however, data is often complex and private, needing a team of in-house, subject matter experts to annotate. Such qualified annotators are more difficult to come by and expensive to employ. Annotator availability is often limited by one or more of the following factors:Expertise: Medical records, legal documents, and other types of specialized or nuanced data may require data annotation from skilled experts whose time is both limited and costly.

Privacy: Financial statements or proprietary data cannot be labeled by crowdsourcing the annotation process to online workers; often, all labeling must be done by a small group of individuals with the proper credentials or clearances.

Latency: Bugs and issues that arise in ML applications that are enterprise-relevant need to be addressed as quickly as possible, but manual re-labeling of training datasets by pools of annotators may delay progress by many days or weeks.

3. Assume a static test distribution

In the real world, things change. To assume a static test distribution is to ignore changes in data, data environments, and organizational needs.Semantic Drift: The words people use, the topics people talk about, and the types of documents that flow through an organization all change over time. This means that training labels have a limited shelf life and won’t always be as relevant as they were the moment they were collected.Evolving Needs: Sometimes needs change due to adjustments in policies or processes; other times, they change because you’ve become more familiar with your problem requirements and realized something was previously missing. When your problem specification changes, both training data, and test data need to be updated to train an up-to-date model and get an accurate picture of how it will perform in the real world.Changing Schemas: Adjustments in downstream workflows or subsequent data applications may necessitate adjustments to your dataset. For example, you may need to increase the number of classes in your dataset in order to feed downstream processes with greater granularity.

Snorkel Flow Makes AI Practical

Snorkel started as a research project out of the Stanford AI lab. As my colleagues and I collaborated with dozens of leaders in the industry, we frequently saw teams who felt the uncomfortable consequences of these impractical assumptions. Their AI application development processes were slowed significantly by the effort required to obtain, manage, and label training data. We saw this data bottleneck, and we believed that with the right approach, we could solve it.With Snorkel, domain experts and data scientists provide labeling functions instead of individuals, and Snorkel Flow automatically combines these varied sources to produce high-quality labels at a massive scale. As a result, ML practitioners now can generate large, high-quality, task-specific training datasets, and in much less time with much lower cost. Because the inputs experts provide are much higher level (e.g., tens of labeling functions instead of tens of thousands of individual labels), full state-of-the-art applications can be reasonably supervised by a small number of qualified annotators—as few as one or two. And because the process for generating new labels is programmatic in nature, it’s easy to update training labels in minutes instead of days or weeks when needs evolve, or distributions shift.Advances in AI technology promise tremendous gains for a wide variety of industries, but too often, products developed in research settings fail to account for crucial bottlenecks that slow real-world progress. Snorkel Flow makes AI practical by offering an end-to-end platform that addresses these classic limitations head-on.Request a demo to learn more about how Snorkel Flow can accelerate AI development for your organization.

Braden is a co-founder and Head of Technology at Snorkel AI. Before Snorkel, Braden spent four years developing new programmatic approaches for efficiently labeling, augmenting, and structuring training data with the Stanford AI Lab, Facebook, and Google. Prior to that, he performed NLP and ML research at Johns Hopkins University and MIT Lincoln Laboratory and earned a B.S. in Mechanical Engineering from Brigham Young University.