Snorkel Flow

The fast path to production for enterprise AI

Unblock strategic AI initiatives

Deliver accurate models for production

Adapt models to specialized tasks

What is AI data development?

Labeled data is required to train highly accurate AI/ML models for specialized, domain-specific tasks. However, manual data labeling with human annotation is slow, expensive, and often blocks enterprise AI projects on day one.

AI data development eliminates this bottleneck. It streamlines collaboration between data scientists and subject matter experts (SMEs) through a unified platform for capturing domain knowledge and applying it to enterprise data. Data scientists can then label entire datasets with the click of a button instead of relying on a team of SMEs to hand-label each data point.
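The Python below is a generic sketch of the idea. Domain knowledge is written as simple labeling rules, every rule runs over every data point, and the rules' votes are combined into one label per example. It is not Snorkel Flow's API. The spam-triage task, the rule functions, and the plain majority-vote combiner are hypothetical choices made for clarity. More sophisticated approaches learn how much to trust each rule instead of counting every vote equally.

```python
from collections import Counter
from typing import Callable, Optional

# Hypothetical label space for an illustrative email-triage task.
SPAM, NOT_SPAM = 1, 0
ABSTAIN = None  # a rule returns ABSTAIN when it has no opinion

# A rule maps one raw example to a label (or ABSTAIN).
Rule = Callable[[str], Optional[int]]

# Each hypothetical rule encodes one piece of SME domain knowledge.
def rule_mentions_prize(text: str) -> Optional[int]:
    return SPAM if "prize" in text.lower() else ABSTAIN

def rule_has_unsubscribe(text: str) -> Optional[int]:
    return SPAM if "unsubscribe" in text.lower() else ABSTAIN

def rule_mentions_meeting(text: str) -> Optional[int]:
    return NOT_SPAM if "meeting" in text.lower() else ABSTAIN

RULES: list[Rule] = [rule_mentions_prize, rule_has_unsubscribe, rule_mentions_meeting]

def label_dataset(texts: list[str], rules: list[Rule]) -> list[Optional[int]]:
    """Apply every rule to every example and combine the votes by majority.

    Examples where all rules abstain, or where the top labels tie,
    are left unlabeled (ABSTAIN) so an SME can review them.
    """
    labels: list[Optional[int]] = []
    for text in texts:
        votes = [v for v in (rule(text) for rule in rules) if v is not ABSTAIN]
        if not votes:
            labels.append(ABSTAIN)  # no rule covers this example
            continue
        ranked = Counter(votes).most_common()
        if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
            labels.append(ABSTAIN)  # conflicting rules with equal support
        else:
            labels.append(ranked[0][0])
    return labels

if __name__ == "__main__":
    docs = [
        "Claim your prize now! Click to unsubscribe.",
        "Agenda for Tuesday's meeting attached.",
        "You won a prize, see you at the meeting",
        "Quarterly report draft",
    ]
    print(label_dataset(docs, RULES))  # [1, 0, None, None]
```

Adding or editing a rule relabels the whole dataset in one pass. Examples that no rule covers, or where rules conflict, are the natural places to ask SMEs for feedback.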

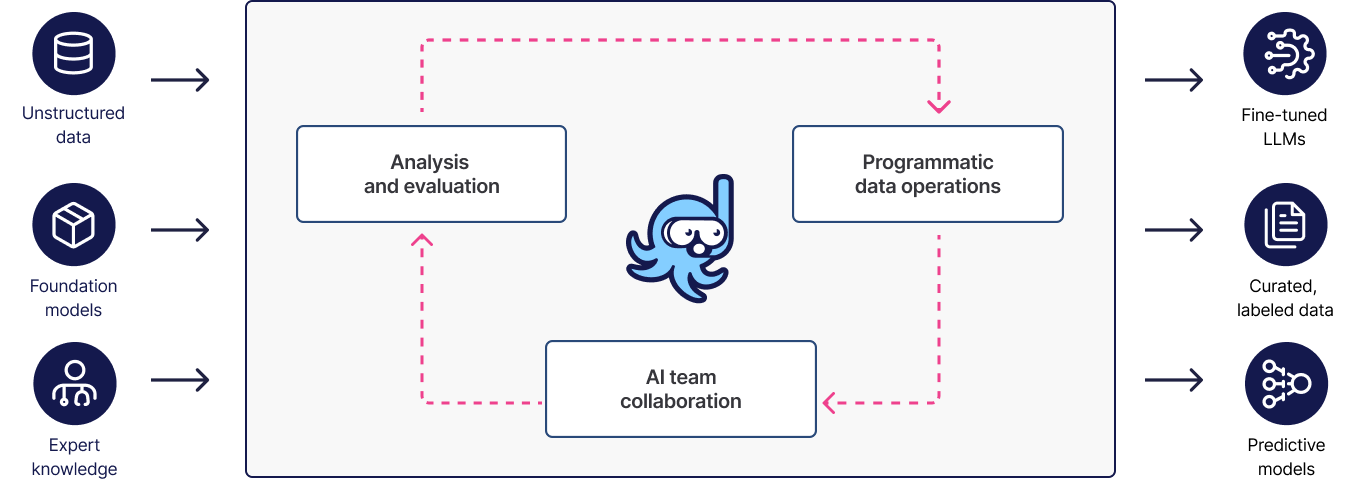

The Snorkel Flow AI data development platform

Snorkel Flow gives data scientists and subject matter experts a collaborative platform for capturing domain knowledge, using it to label entire datasets or generate synthetic ones, and quickly iterating on training data and models with built-in guided error analysis and model evaluation.

Unblock AI initiatives

AI/ML teams should never be blocked due to missing or low-quality training data. Nor should data scientists, ML engineers, and SMEs be required to spend valuable time on manual data labeling.

Empower data scientists to curate high-quality training data in days rather than months

Keep SMEs in the loop to improve data quality without manual data labeling

Deploy AI/ML models that achieve higher accuracy and meet production requirements

Ditch the spreadsheets, and deliver production models faster

Jumpstart data labeling with LLMs

Capture and apply domain knowledge

Fine-tune models and RAG pipelines

Curate training data to fine-tune LLMs and embedding models, and extract document metadata to improve retrieval.

Evaluate in-domain model accuracy

Deliver accurate models to production

Evaluate LLMs against SME domain knowledge and feedback to identify where models fail to generate accurate responses.

Review model-guided error analysis results to improve label accuracy by surfacing label errors, conflicts, and low-confidence predictions.

Incorporate SME feedback through collaborative features such as ground-truth annotation, tagging, and comments.

Iterate on training data by adding or modifying SME domain knowledge as needed, and monitor diversity and coverage to avoid overfitting and underfitting.

Adapt models to specialized tasks

Foundation models have become extremely capable, but they lack the domain knowledge needed to perform specialized tasks within the enterprise. Specialized models derived from them can combine their inherent natural language and reasoning capabilities with enterprise data, corporate policies, and industry standards.

Training

Fine-tuning

Distillation

Interoperable with your AI stack

Data ingest

Model training

Production serving

Deploy models with MLflow or through integrations with AWS SageMaker, Google Vertex AI, and Databricks.

Infrastructure

Make data your differentiator and deliver specialized AI 10-100x faster

Data labeling

LLM fine-tuning

LLM evaluation

RAG optimization

Optimize RAG pipelines by fine-tuning embedding models and extracting document metadata to improve retrieval accuracy.

Ready to get started?

Take the next step and see how you can accelerate AI development by up to 100x.